Microsoft Copilot as “AI Companion”

Background

The global race to develop the ultimate artificial intelligence (AI) chatbot has led to increasingly advanced models capable of generating human-like conversations. As a social psychologist, I had heard from clients that they used chatbots to express their emotions and to get relationship advice. I also noticed that Microsoft positioned Copilot, its AI chatbot, as an “AI companion.”

This led me to wonder: Could Copilot provide the nuanced, human-like connection needed for meaningful relationship conversations?

Therefore, in January 2025, I designed and conducted a user research study on human-computer interaction, focusing on the use of Microsoft Copilot for emotional conversations.

Research Goal

My user research on Copilot set out to explore the following questions:

- How would having an emotional conversation with Copilot compare to conversing with a human?

- What would be the advantages and disadvantages of an emotional conversation with Copilot?

- What would users want to change about their experience with Copilot?

Method

Usability Test

To better understand users’ impressions of Copilot, I asked users to participate in a usability test. I recruited participants by asking for volunteers among the subscribers to my weekly newsletter on personal growth and relationships. Ten subscribers volunteered.

Here are the instructions I gave users:

Over the next few days, visit Copilot and have two conversations with these prompts:

- Describe to Copilot an experience in which you felt hurt, shame, or frustration. Allow Copilot to respond to your feelings and continue a back-and-forth conversation for 2 minutes or more.

- Describe to Copilot a social issue or problem that you currently face with another person or a group of people. Ask Copilot for advice on how to solve your problem and continue the conversation for 2 minutes or more.

User Survey

I then asked participants to complete a survey about their experiences having emotional conversations with Copilot. The survey included both quantitative and qualitative questions.

I collected the responses with a Google Form, exported them to a .csv file, and analyzed the quantitative data in Power BI.
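Power BI handled the tabulation in this study. For readers who prefer a scripted alternative, here is a minimal Python sketch of a comparable summary, assuming the .csv export has one column per 1–5 rating question. The column names and response values below are hypothetical, invented only to show the output shape; they are not this study’s data. Because Likert responses are ordinal, the sketch reports the median and the count at each scale point alongside the mean.

```python
import csv
import io
import statistics
from typing import Dict, List, Optional, TextIO


def summarize_likert(csv_file: TextIO, columns: List[str]) -> Dict[str, dict]:
    """Summarize 1-5 Likert ratings from a survey CSV export.

    `columns` lists the header names of the rating questions
    (the names used in the example below are hypothetical).
    Blank, non-numeric, or out-of-range cells are skipped rather than guessed.
    """
    ratings: Dict[str, List[int]] = {col: [] for col in columns}
    for row in csv.DictReader(csv_file):
        for col in columns:
            cell = (row.get(col) or "").strip()
            try:
                value = int(cell)
            except ValueError:
                continue  # blank or non-numeric cell
            if 1 <= value <= 5:
                ratings[col].append(value)

    summary: Dict[str, dict] = {}
    for col, vals in ratings.items():
        median: Optional[float] = statistics.median(vals) if vals else None
        mean: Optional[float] = round(statistics.mean(vals), 2) if vals else None
        summary[col] = {
            "n": len(vals),  # number of valid responses
            "median": median,  # preferred center for ordinal data
            "mean": mean,
            "counts": {point: vals.count(point) for point in range(1, 6)},
        }
    return summary


if __name__ == "__main__":
    # Invented responses for illustration only; not data from this study.
    sample = io.StringIO(
        "understood_facts,understood_feelings\n"
        "4,2\n"
        "5,3\n"
        "3,\n"
    )
    for question, stats in summarize_likert(
        sample, ["understood_facts", "understood_feelings"]
    ).items():
        print(question, stats)
```

With only about ten respondents, the per-point counts often say more than the mean, which is one reason to report them side by side.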

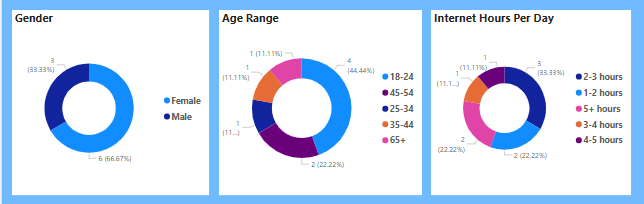

Demographics

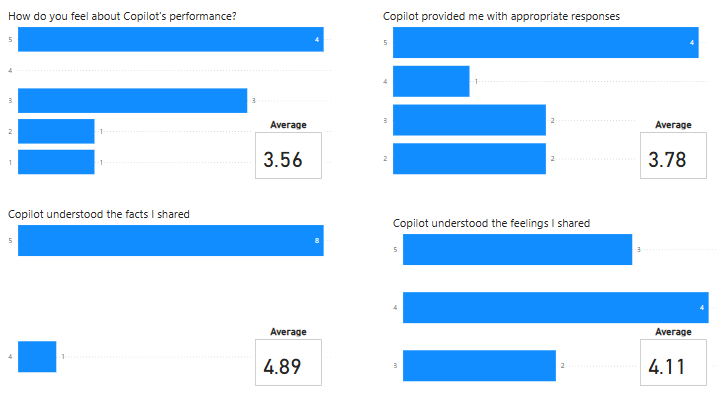

User Performance Ratings

Users were asked to rate Copilot on a scale of 1–5 on various measures, with 1 = strongly disagree and 5 = strongly agree:

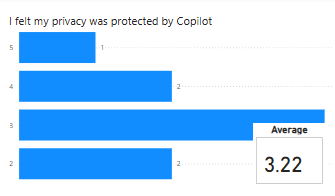

User Privacy Rating

This data yielded three takeaways:

- Users gave mixed reviews of their experience with Copilot.

- Users rated Copilot higher for understanding the facts they shared than for understanding their feelings.

- Users gave relatively low ratings for how private their conversations felt.

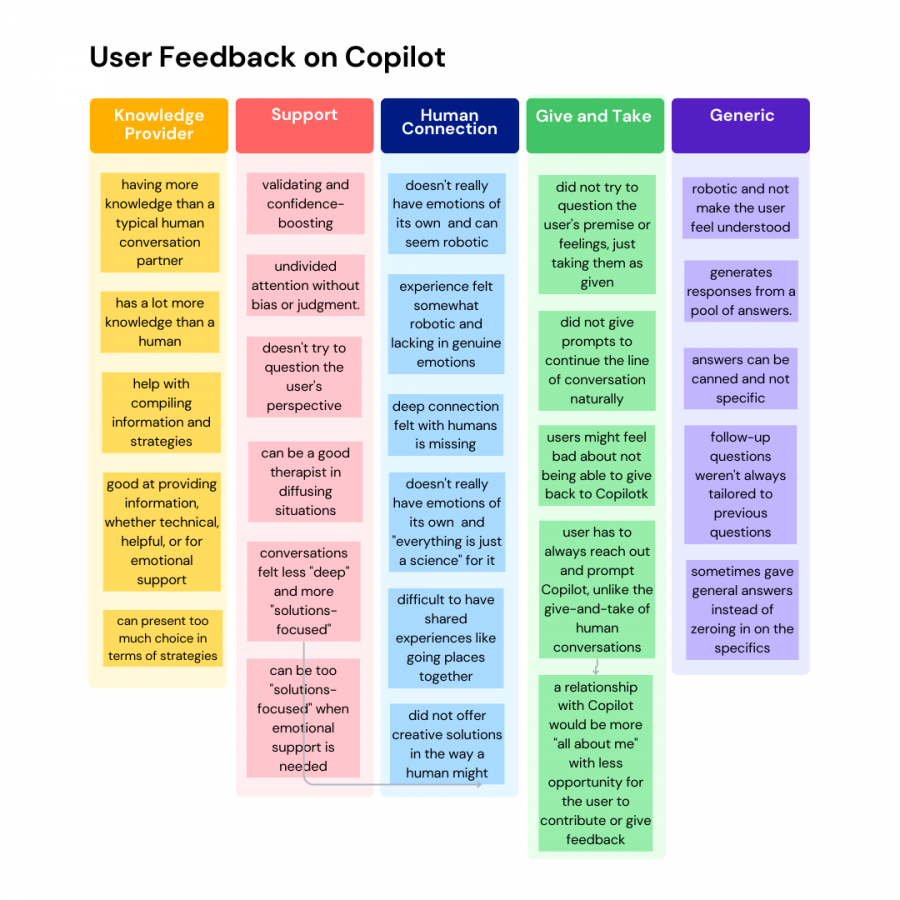

I analyzed the qualitative data with Google NotebookLM and also manually, using affinity diagrams to find themes in user responses. I wanted to compare Google NotebookLM’s results with my manual analysis. Interestingly, Google NotebookLM provided a useful high-level organization of the data, while my manual analysis surfaced more nuanced, specific feedback that prompted follow-up questions I might otherwise have missed. This highlighted the value of pairing human analysis with AI-driven analysis.

I grouped user responses into themes based on the following open-ended survey questions:

- How was your experience with Copilot different from a conversation with a human?

- How do you think a relationship with Copilot would be different from a relationship with a human?

- What were the strengths of Copilot based on your experience?

- What were the weaknesses of Copilot based on your experience?

- What would you wish to change about your Copilot experience?

User Interviews

To follow up on the mixed reviews and to develop user personas, I asked survey participants to volunteer for a user interview. Interviews let me understand each user’s thought process and ask follow-up questions about their feedback. They also allowed me to put myself in each user’s shoes and identify their pain points.

I conducted seven recorded phone interviews, each lasting 10–20 minutes. I downloaded and transcribed the recordings, then uploaded the transcripts to Google NotebookLM for further analysis. I used this personalized qualitative data to develop user personas.

User Personas

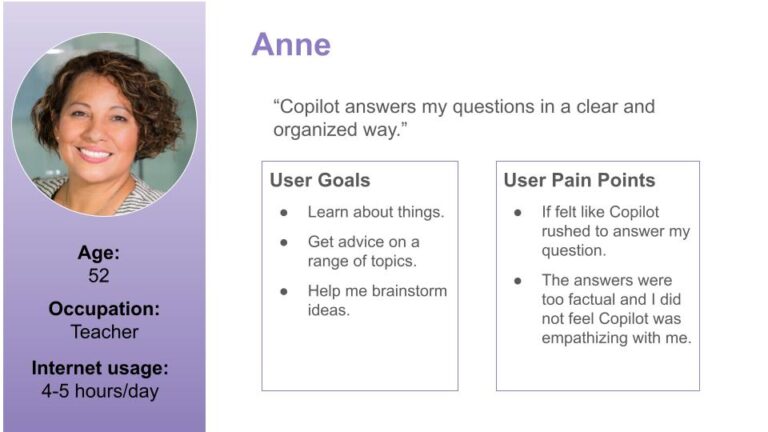

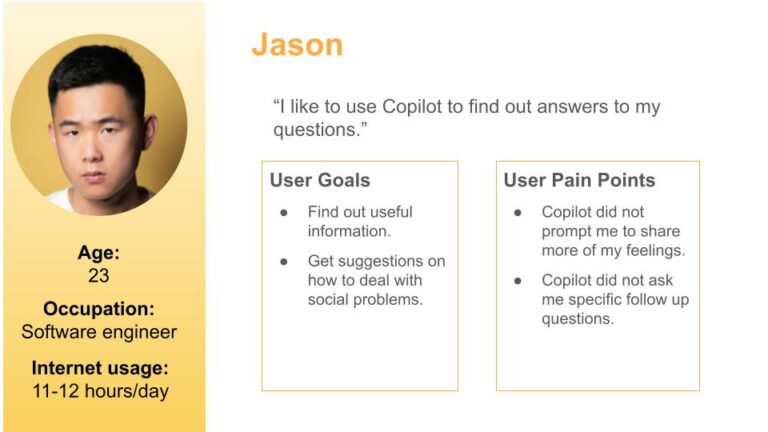

User Persona 1

User Persona 2

Final Results

User Recommendations

The user interviews provided further detail about the pros and cons of users’ experiences with Copilot.

In particular, I clarified how users felt about their privacy on Copilot. One user thought that I could access her conversation with Copilot; she did not view Copilot as private. Another user was less concerned about her privacy, saying, “I always assume that anything I do online can be collected and my information can be used without my consent.”

Here is a summary of users’ recommendations to improve Copilot:

- Express Empathy and Emotional Attunement

  - Users want Copilot to demonstrate more empathy and to intuit their needs.

- Proactive Engagement

  - Users would prefer that Copilot engage in a give-and-take conversation, prompting them to continue rather than waiting for the user to open a new line of discussion. Users also wished Copilot would ask questions so that they could “give” to Copilot as well.

- Slow Down Copilot’s Response Time

  - Incorporate a slight delay into Copilot’s responses, during which Copilot signals that it is thinking about the user’s question.

- Provide Fewer Options in Response

  - Present one or two options at a time rather than a long list, so that Copilot comes across as more human-like.

- Offer Personalized and Relevant Responses

  - Tailoring responses to the user’s previous questions and input was a key suggestion.

- Share Privacy Policy

  - Post a brief summary of the privacy policy to reassure users about the private nature of their communication.

Lessons Learned

I learned a lot about users’ needs and Copilot’s limitations through this user research project. It was interesting to see how the data collected through the survey differed from the data collected through interviews.

The quantitative survey data was not very informative. Although I collected it on a Likert scale so that it could be evaluated statistically, ten participants were too few for the results to be meaningful. In retrospect, I would use quantitative methods to collect observable metrics, such as task completion rate or time on task, and use qualitative methods to capture users’ subjective opinions and investigate the “why” behind them.

On the other hand, the qualitative, open-ended survey data was rich with nuanced and clear user feedback. User interviews were even more helpful because they let me trace each user’s experience in the context of that user’s personal profile. This helped me understand users’ pain points even better.

The user interviews were the most helpful in crafting recommendations to improve Copilot as an AI companion. In the future, I would place more emphasis on user interviews.

Navigating the Benefits and Limitations

Overall, this AI companion study reveals a complex picture of user experiences with Copilot. While users appreciate its knowledge, accessibility, and solution-oriented approach, they also yearn for the emotional depth, personal connection, intuitive understanding, and give-and-take that characterize human relationships.

Users pointed out that the ability to connect deeply with another human being provides a unique feeling that is different from interacting with Copilot. Human interaction fulfills needs such as relating to others, feeling understood in the context of one’s life, and bonding over real-life experiences.

Therefore, users emphasized the importance of maintaining human relationships for emotional fulfillment and genuine connection, viewing Copilot more as a knowledge provider than a replacement for human companionship. As AI companions continue to develop, these limitations will need to be explored and addressed.

Microsoft Revises Copilot

Update: As of April 2025, I was excited to see that Microsoft had implemented many of this study’s recommendations, although Microsoft was unaware of its findings. Specifically, here are some improvements that reflect our suggestions:

- Microsoft added a feature that lets users choose between a “quick response” and a “think deeper” response, effectively allowing users to choose Copilot’s response time and giving them the impression that Copilot is “thinking deeply” about their question.

- Copilot now offers the user several follow-up questions in separate bubbles so that the user can choose the direction of the conversation, creating more give-and-take.

- Copilot is much better at tailoring the conversation to questions the user asked earlier.

- There are also options to select the mode of conversation a user would like to have such as “Get Advice,” “Brainstorm Ideas,” and “Learn Something New.”

I look forward to further updates to continually improve the user experience.